Self-service analytics implementation POC: Databricks Genie

Self-service analytics implementation projects often fail because they treat the symptom instead of the disease. Organizations deploy dashboards, build reports, and give users access to data, then wonder why the data team's backlog keeps growing.

The real problem isn't access. It's dependency.

Tenjumps recently completed a 30-day proof of concept evaluating whether a self-service analytics implementation built on Databricks AI/BI Genie could eliminate analyst dependency for the largest independently owned logistics provider in the USA.

The challenge: business users had questions, data analysts had backlogs, and every follow-up question meant another ticket.

Arvind Divakar, Data Engineer at Tenjumps, led the technical POC. The existing Snowflake and Power BI infrastructure handled structured reporting well. Dashboards displayed operational KPIs correctly. But the moment someone needed to understand why a metric changed or wanted to compare specific segments, the request went back to the data team.

This case study breaks down the 30-day POC, evaluating whether natural language analytics could enable business users to ask follow-up questions without analyst support.

The problem: Can dashboards become conversational?

The logistics provider had invested in business intelligence infrastructure. Snowflake handled data warehousing. Power BI delivered operational dashboards. KPIs displayed correctly. Filters worked. Leadership got the reports they expected.

The POC was initiated to evaluate whether business users could interact with data using natural language and whether dashboards could become conversational rather than static.

The project objective was stated clearly: demonstrate whether business users can "talk to the data" directly using natural language, whether dashboards can become interactive and conversational, and whether ad hoc analytical questions can be answered within the same interface.

The existing setup answered what happened. Understanding why required analyst intervention. When executives reviewed performance metrics, follow-up questions emerged that existing dashboards couldn't answer.

The POC tested whether AI/BI Genie could bridge this gap.

The POC approach: Intelligence layer, not replacement

Tenjumps designed the POC around three core principles:

Snowflake remains the source of truth

No data duplication or migration

AI capabilities operate within governed datasets

Rather than replacing the existing BI stack, this approach tested adding an intelligence layer on top of it.

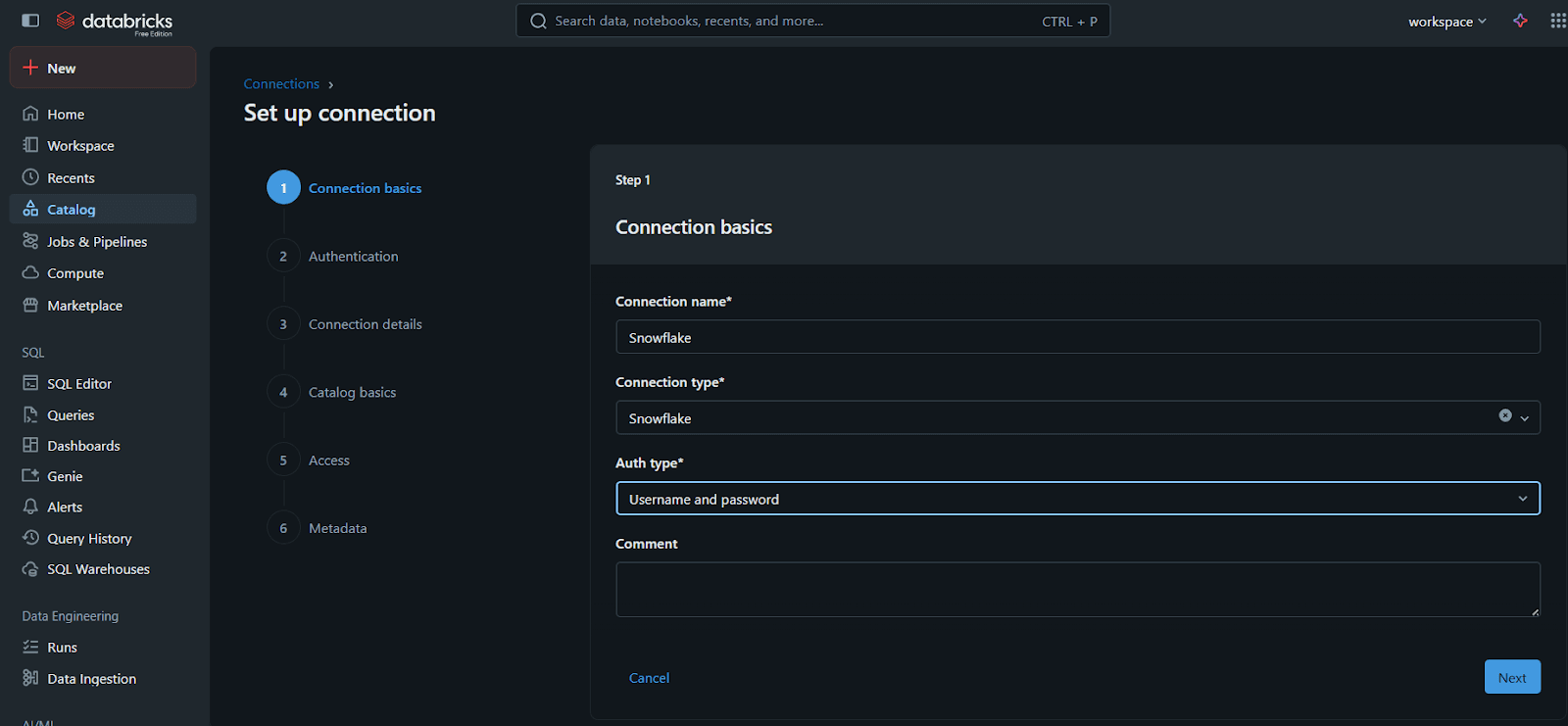

POC architecture: Snowflake federation

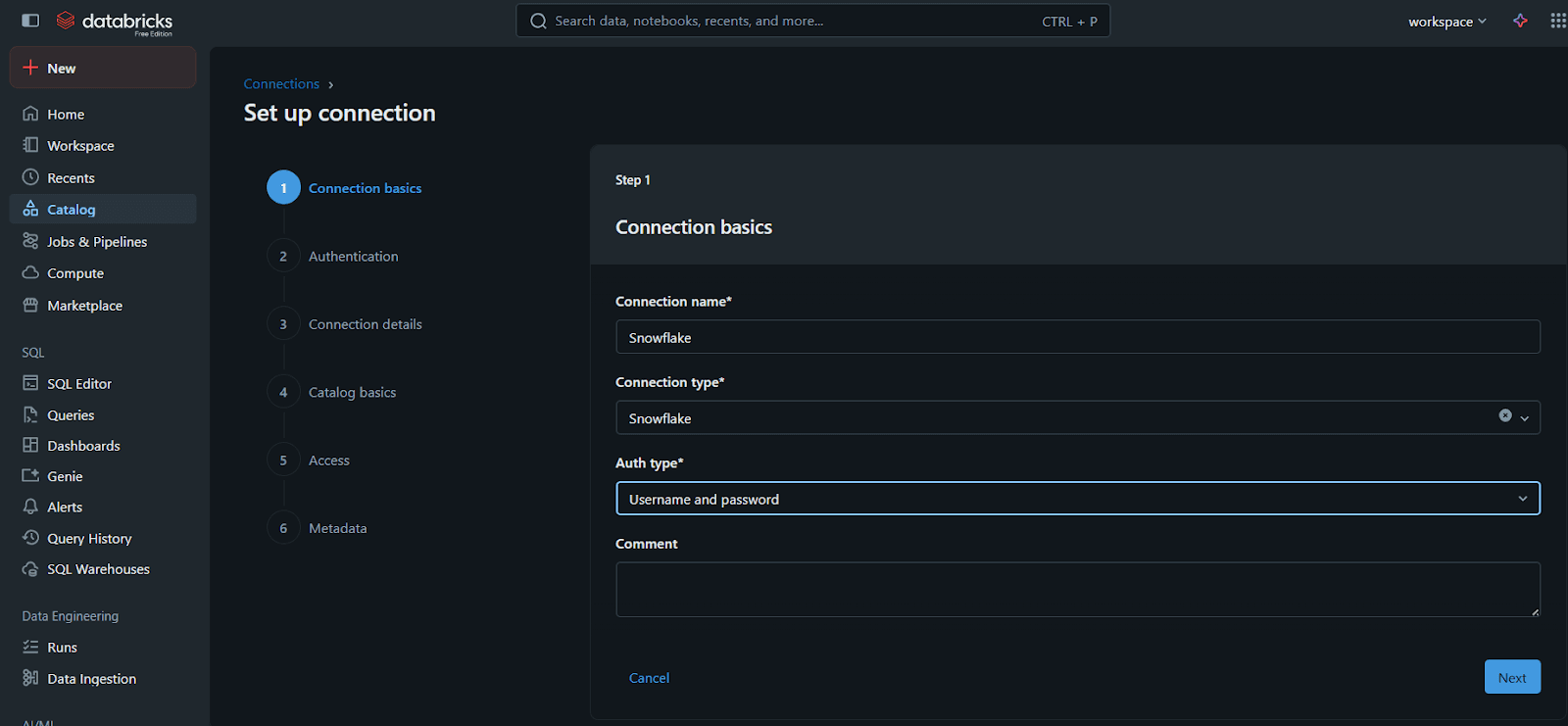

The POC established a federation connection between Databricks and Snowflake using Unity Catalog.

Databricks queries Snowflake tables directly through federation without moving data. No duplication, no sync issues, no migration risk. Snowflake remains the source of truth.

This approach meant:

Zero data governance changes required

No disruption to existing Power BI dashboards

Snowflake continues as the operational data warehouse

Databricks adds the intelligence layer for testing

The federation connection configuration took one day. Snowflake tables became immediately queryable inside Databricks SQL Warehouse without replication.
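As a rough illustration of what that one-day setup involves, the sketch below renders the two SQL statements Databricks Lakehouse Federation typically uses to register a Snowflake database in Unity Catalog. The connection name, catalog name, host, warehouse, database, and secret scope are all placeholders rather than values from the POC, and the option names should be checked against the current Databricks documentation before use.

```python
def federation_statements(connection, catalog, host, warehouse, database, secret_scope):
    """Render the SQL that registers a Snowflake database as a foreign catalog.

    All argument values are placeholders supplied by the caller; nothing here
    reflects the actual POC configuration.
    """
    create_connection = (
        f"CREATE CONNECTION IF NOT EXISTS {connection} TYPE snowflake\n"
        "OPTIONS (\n"
        f"  host '{host}',\n"
        "  port '443',\n"
        f"  sfWarehouse '{warehouse}',\n"
        # Credentials are read from a Databricks secret scope, not hard-coded.
        f"  user secret('{secret_scope}', 'snowflake_user'),\n"
        f"  password secret('{secret_scope}', 'snowflake_password')\n"
        ")"
    )
    create_catalog = (
        f"CREATE FOREIGN CATALOG IF NOT EXISTS {catalog}\n"
        f"USING CONNECTION {connection}\n"
        f"OPTIONS (database '{database}')"
    )
    return [create_connection, create_catalog]


if __name__ == "__main__":
    for statement in federation_statements(
        connection="snowflake_ops_conn",        # hypothetical name
        catalog="snowflake_ops",                # hypothetical name
        host="example.snowflakecomputing.com",  # hypothetical host
        warehouse="REPORTING_WH",               # hypothetical warehouse
        database="OPERATIONS",                  # hypothetical database
        secret_scope="federation",              # hypothetical secret scope
    ):
        print(statement + ";\n")
```

Once the foreign catalog exists, its tables are addressed with the usual three-part name (catalog, schema, table) from a SQL warehouse, which is what makes replication unnecessary.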

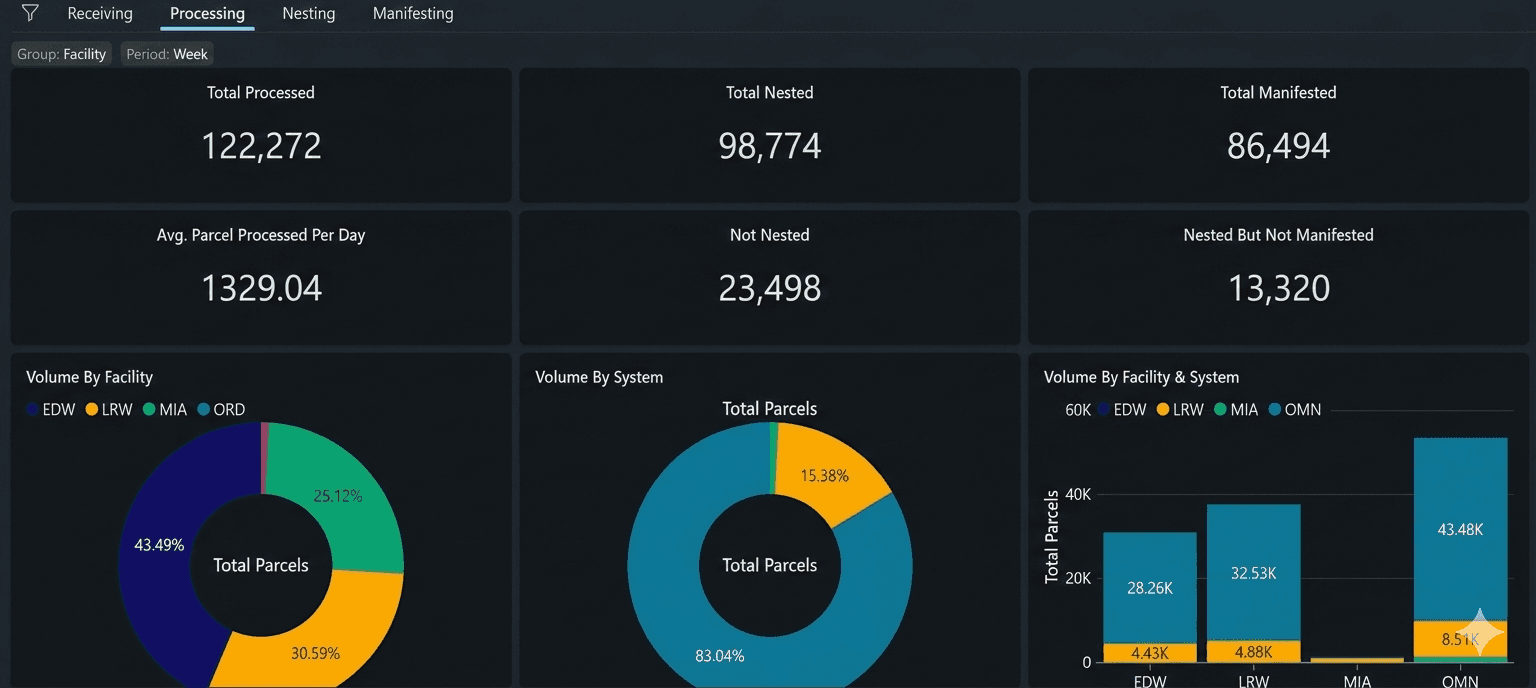

POC implementation: Recreating dashboards in Databricks

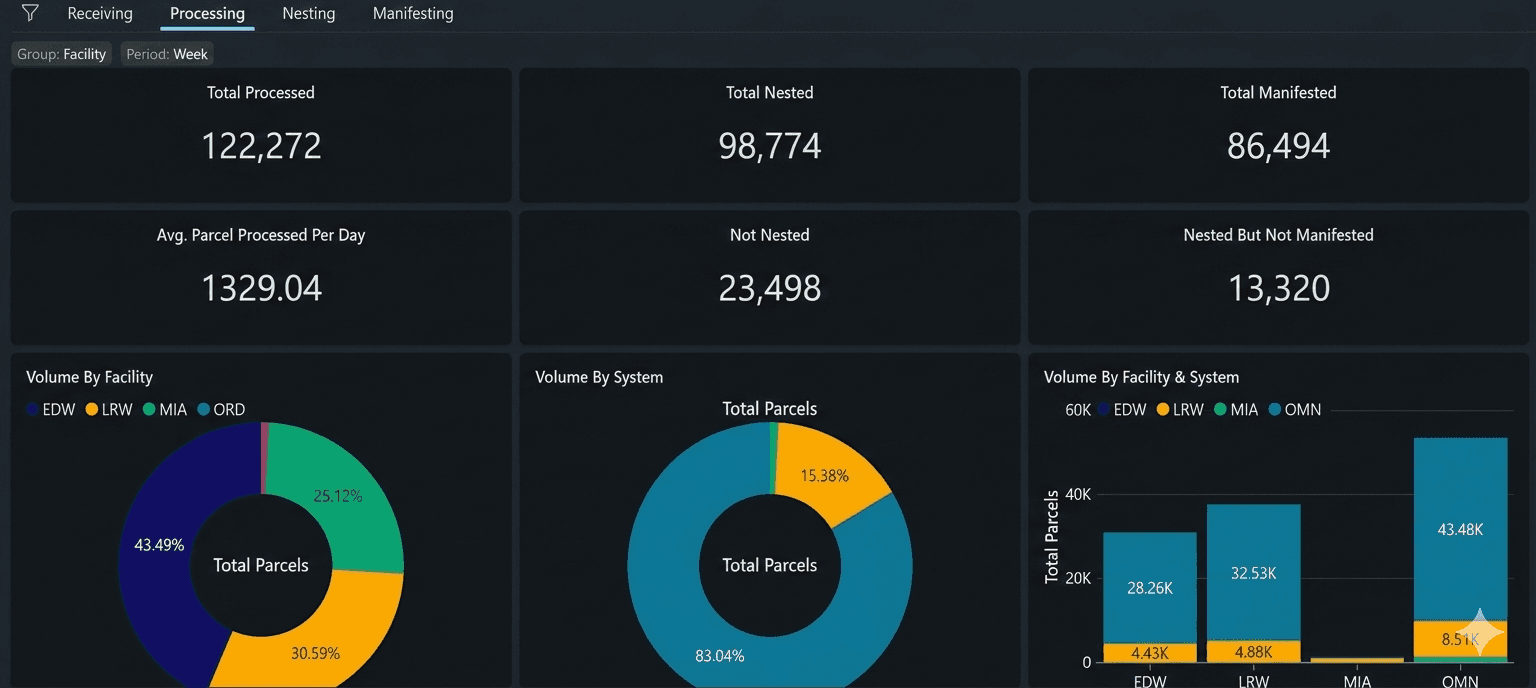

The POC focused on recreating operational dashboards within Databricks to maintain reporting parity, then testing AI/BI Genie integration.

Dashboard recreation with validated metrics

Tenjumps rebuilt core operational dashboards to ensure the POC operated on the same data and business logic as existing reports.

Key metrics recreated:

Processed parcel count

Nested count

Manifested count

Transit time metrics by route

Exception counts by type and vendor

Vendor-level performance comparisons

Facility-level distribution

Every calculation was validated against Power BI to ensure metric consistency. Row counts, aggregations, and date logic all had to match exactly.
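A parity check of this kind can be sketched as a comparison of two metric snapshots, one exported from Power BI and one computed in Databricks. The metric keys and numbers below are invented for illustration and are not POC results.

```python
def find_mismatches(power_bi, databricks):
    """Return {metric: (power_bi_value, databricks_value)} for every metric
    that differs or is missing on either side. Values must match exactly."""
    mismatches = {}
    for metric in sorted(set(power_bi) | set(databricks)):
        expected = power_bi.get(metric)
        actual = databricks.get(metric)
        if expected != actual:
            mismatches[metric] = (expected, actual)
    return mismatches


# Illustrative values only.
power_bi = {"processed_parcel_count": 1200, "nested_count": 300, "manifested_count": 1150}
databricks = {"processed_parcel_count": 1200, "nested_count": 298, "manifested_count": 1150}

print(find_mismatches(power_bi, databricks))
# {'nested_count': (300, 298)}
```

An empty result means the recreated dashboard agrees with the existing report for every metric in the snapshot.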

Interactive filters replicated the existing user experience:

Date range selection

Facility filtering

Vendor breakdown

Business name segmentation

Country-specific views

The visual layer mirrored Power BI's structure using trend charts, distribution visualizations, KPI cards, and tabular drilldowns.

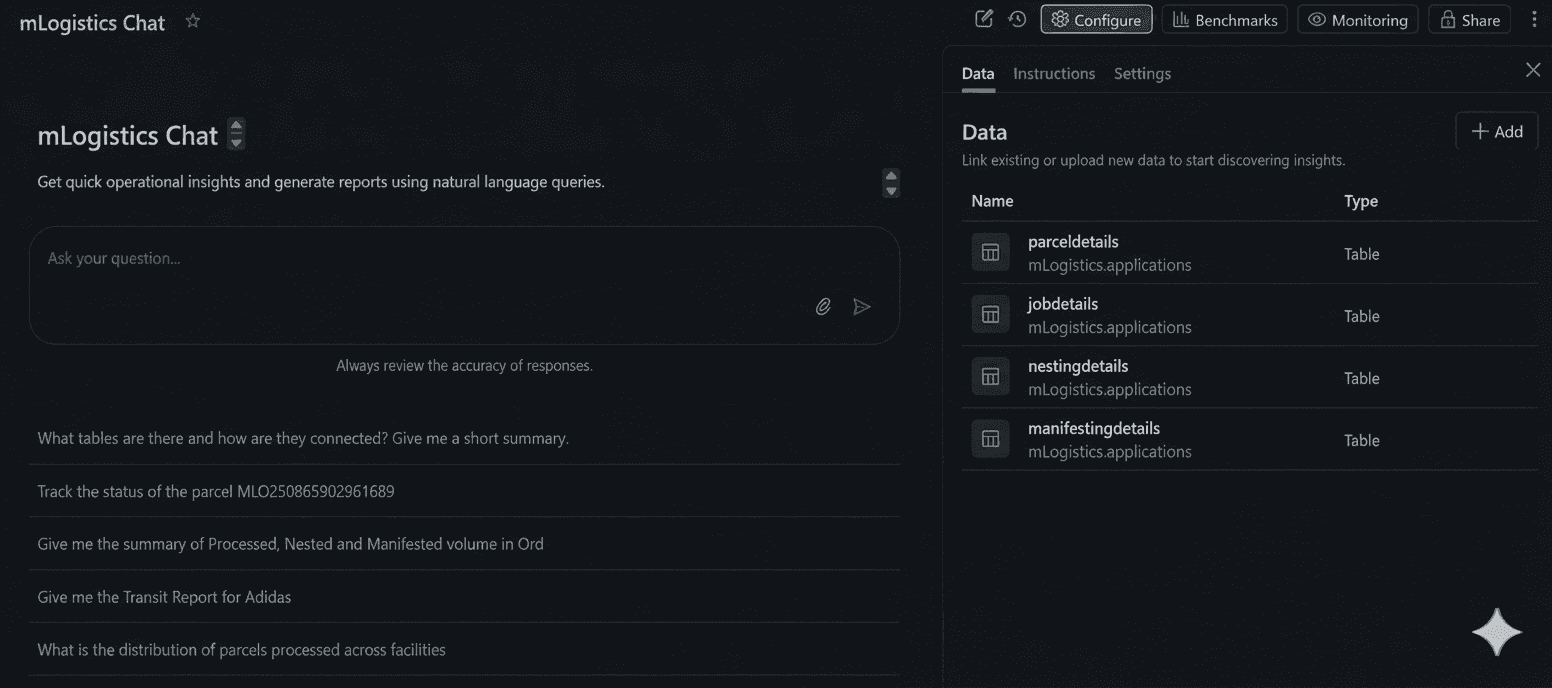

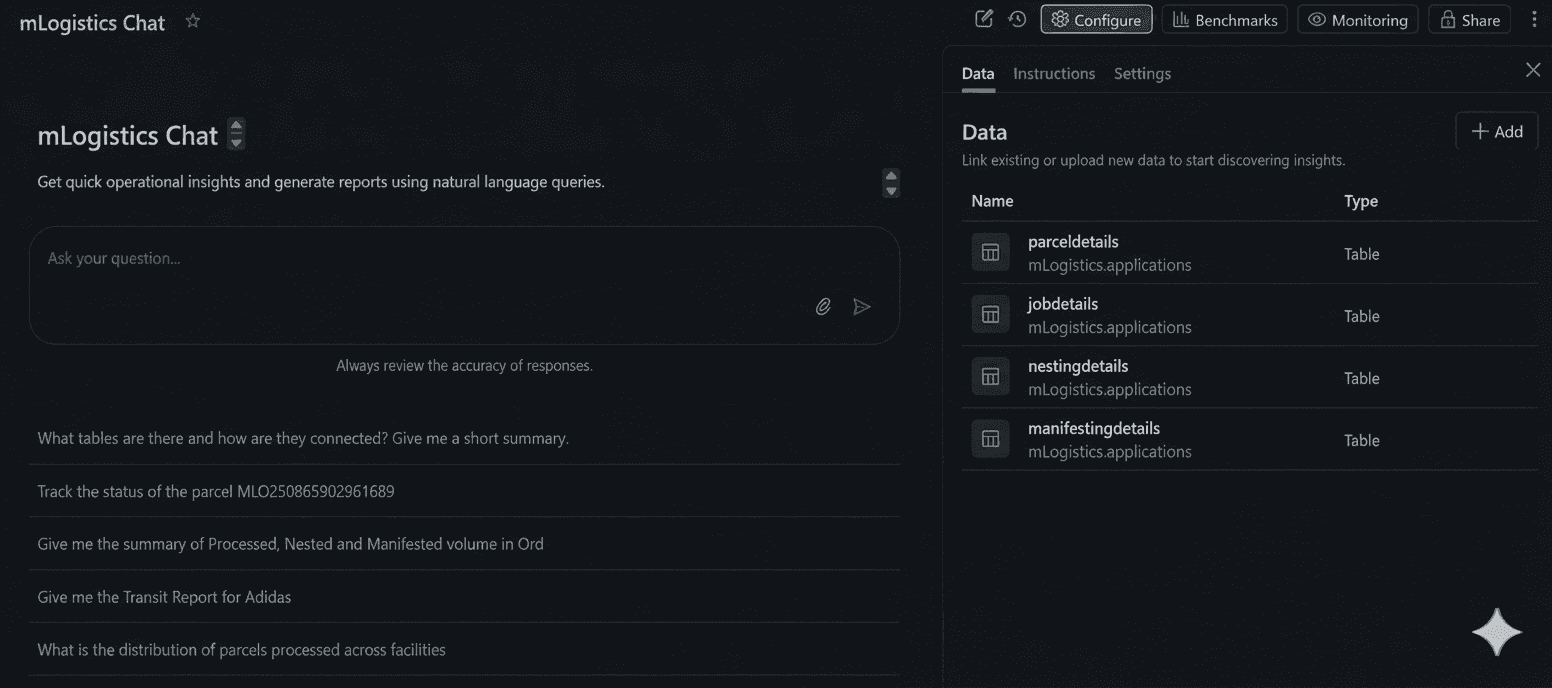

Configuring AI/BI Genie for natural language queries

Once dashboards matched existing reporting, the POC moved to testing AI/BI Genie capabilities.

A dedicated Genie Space was created and linked directly to the validated operational dataset. This ensured Genie operated on the same governed data model used for structured reporting.

The configuration required adding business descriptions to key fields:

What each KPI represents

How calculations are derived

What time-based fields mean

Differences between processed, nested, and manifested statuses

This semantic layer proved critical for the POC. Genie's ability to generate correct SQL depends heavily on understanding business context, not just table schemas.
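One way to keep that semantic layer complete is to hold the business descriptions in a single place and flag any exposed column that lacks one. The sketch below assumes hypothetical column names and uses placeholder text where the real business definitions would go; it does not reflect how Genie stores descriptions internally.

```python
# Hypothetical column names. The angle-bracket text is a placeholder for the
# business definitions agreed with the operations team.
FIELD_DESCRIPTIONS = {
    "processed_parcel_count": "<what counts as a processed parcel, and when it is counted>",
    "nested_count": "<how nested status differs from processed and manifested>",
    "manifested_count": "",  # not yet documented
    "transit_time_days": "<start and end events, and the unit of measure>",
}


def undocumented_fields(columns, descriptions):
    """Return the columns that have no non-empty business description."""
    return [c for c in columns if not descriptions.get(c, "").strip()]


columns = ["processed_parcel_count", "nested_count", "manifested_count", "exception_type"]
print(undocumented_fields(columns, FIELD_DESCRIPTIONS))
# ['manifested_count', 'exception_type']
```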

Testing with operational business questions

The POC tested Genie against realistic business questions:

Week-over-week transit time comparisons

Vendor-level exception breakdowns

Facility-specific processing trends

Parcel-level drilldowns

Month-over-month performance summaries

For each prompt, the POC validated that Genie generated SQL matching expected Snowflake logic. Results were cross-validated with dashboard metrics to confirm consistency.
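The cross-validation step can be sketched as a small benchmark: each test question is paired with the value shown on the validated dashboard, and the answer returned for that question is compared against it. The `ask` callable is a hypothetical stand-in for however answers are collected from Genie (manually or programmatically), and the prompts and numbers are invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class BenchmarkQuestion:
    prompt: str
    expected: float  # value taken from the validated dashboard


def score(
    questions: List[BenchmarkQuestion],
    ask: Callable[[str], float],
    tolerance: float = 0.0,
) -> Tuple[float, List[str]]:
    """Return (accuracy, failed_prompts) for a set of benchmark questions."""
    failures = [q.prompt for q in questions if abs(ask(q.prompt) - q.expected) > tolerance]
    accuracy = 1 - len(failures) / len(questions)
    return accuracy, failures


# Illustrative prompts and values only.
questions = [
    BenchmarkQuestion("Total exceptions for vendor A last week", 42.0),
    BenchmarkQuestion("Average transit time last month in days", 3.5),
]

# Hypothetical recorded answers standing in for real Genie responses.
recorded_answers = {
    "Total exceptions for vendor A last week": 42.0,
    "Average transit time last month in days": 3.9,
}

accuracy, failures = score(questions, recorded_answers.__getitem__)
print(accuracy, failures)
# 0.5 ['Average transit time last month in days']
```

Rerunning the same benchmark after each round of description changes gives a simple way to see whether a configuration change helped.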

Iterative refinement during POC

Testing revealed areas where configuration improved Genie's accuracy:

Clarifying metric definitions to match business terminology

Refining column descriptions to eliminate ambiguity

Reducing technical field names in favor of operational language

Aligning naming conventions with how users actually describe data

The POC demonstrated that semantic clarity directly impacts natural language query accuracy. Configuration was optimized for conversational business language rather than database schemas.

POC result: Embedded conversational analytics

The POC validated that Genie could be embedded directly within the Databricks dashboard interface. This allowed testing whether users could ask follow-up questions while viewing KPI visuals without switching applications.

The integration meant structured reporting and conversational analytics operated within the same governed environment.

Example interaction patterns the POC tested:

Dashboard shows exception rate trends → Test querying vendor-specific breakdowns

Dashboard shows transit time metrics → Test country-by-country comparisons

Dashboard shows facility processing volume → Test identifying above-average performers

The POC demonstrated that natural language queries could answer exploratory questions without analyst intervention while maintaining governed access to validated data.
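To make the last pattern concrete, the sketch below shows the kind of calculation a question such as "which facilities are above average?" resolves to. The facility names and volumes are invented, and in practice Genie would express this as SQL against the governed dataset rather than Python.

```python
from statistics import mean


def above_average(volume_by_facility):
    """Return facility names whose volume exceeds the mean across all facilities."""
    average = mean(volume_by_facility.values())
    return sorted(name for name, volume in volume_by_facility.items() if volume > average)


# Illustrative values only.
volumes = {"Facility A": 100, "Facility B": 250, "Facility C": 130}
print(above_average(volumes))
# ['Facility B']
```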

What the POC demonstrated

The 30-day proof of concept validated the feasibility of natural language-driven self-service analytics.

Analyst dependency can be eliminated

The POC showed that follow-up questions that previously required analyst intervention can be answered directly by business users through natural language queries. Users can explore data independently without submitting tickets for custom analysis.

Decision cycles accelerate significantly

The POC showed time from question to insight dropping from hours or days to seconds. Users accessing dashboards can immediately drill into anomalies without waiting for custom reports.

Data governance remains intact

The POC confirmed all queries execute against the same governed Snowflake dataset used for structured reporting. No parallel data marts. No ungoverned analysis. Adding conversational capabilities does not compromise data integrity.

Existing infrastructure stays functional

The POC demonstrated that Power BI dashboards can continue operating while Databricks adds conversational capabilities. Snowflake remains the data warehouse. The approach adds intelligence without replacing functional infrastructure.

Technical considerations validated by POC

The POC validated several technical approaches for self-service analytics implementation.

Federation eliminates migration risk

Snowflake-Databricks federation through Unity Catalog proved effective for enabling conversational analytics without data movement. This approach reduces implementation risk and maintains existing data governance structures.

The POC showed federation works well when:

Source system governance is strong

Migration risk outweighs migration benefits

Existing BI infrastructure remains functional

The goal is adding intelligence, not replacing platforms

Semantic layer quality determines accuracy

The POC confirmed that natural language query accuracy depends directly on semantic clarity: the quality of business context descriptions determines how well a question maps to correct SQL. Time invested in business descriptions, metric definitions, and terminology alignment significantly improved Genie response quality, and this configuration work proved essential during the POC.

Iterative refinement improves results

The POC validated that Genie configuration improves based on testing patterns. Initial deployment focused on core operational metrics. As test questions were asked, additional context and descriptions improved accuracy.

Self-service analytics implementation is not a one-time configuration. The POC showed it evolves based on usage patterns and feedback.

Governed self-service is achievable

The POC demonstrated that self-service analytics can balance user freedom with data governance by:

Limiting Genie access to validated, governed datasets

Executing all queries through the same SQL warehouse as dashboards

Maintaining role-based access controls

Auditing queries for compliance and usage patterns
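The first and third controls can be sketched as read-only Unity Catalog grants scoped to the validated tables. The catalog, schema, table, and group names below are hypothetical, and the privilege names should be confirmed against the current Databricks documentation.

```python
def read_only_grants(catalog, schema, tables, group):
    """Render GRANT statements giving a group read-only access to specific tables.

    All names are supplied by the caller and are placeholders in this sketch.
    """
    principal = f"`{group}`"  # backticks allow group names containing spaces or hyphens
    statements = [
        f"GRANT USE CATALOG ON CATALOG {catalog} TO {principal}",
        f"GRANT USE SCHEMA ON SCHEMA {catalog}.{schema} TO {principal}",
    ]
    statements += [
        f"GRANT SELECT ON TABLE {catalog}.{schema}.{table} TO {principal}"
        for table in tables
    ]
    return statements


if __name__ == "__main__":
    for statement in read_only_grants(
        catalog="snowflake_ops",                  # hypothetical foreign catalog
        schema="reporting",                       # hypothetical schema
        tables=["parcel_events", "exceptions"],   # hypothetical tables
        group="ops-analytics-users",              # hypothetical group
    ):
        print(statement + ";")
```

Granting SELECT only on the tables behind the validated dashboards keeps natural language queries inside the same boundary as structured reporting.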

POC timeline: 30 days from setup to validation

The POC timeline from federation setup to validated results demonstrates feasibility for mid-market organizations.

Key success factors identified:

Strong existing data foundation (Snowflake provided clean, governed data)

Clear business metrics already defined in current BI tools

Defined use cases for natural language interaction

Commitment to semantic layer development

Willingness to iterate based on testing

The POC was not a multi-quarter transformation. Building on what existed, adding intelligence where it mattered, and validating the approach in weeks proved achievable.

The POC followed Tenjumps' BEM delivery model: Explore (assess current state), Engage (design the solution), Execute (build in focused sprints), Evolve (refine based on results).

For organizations evaluating similar approaches, the POC validated this path:

Establish federation to avoid migration risk

Recreate core dashboards in Databricks to validate parity

Configure Genie with a strong semantic layer

Test with real business questions

Refine based on accuracy

Validate feasibility for production deployment

What the POC proved about self-service analytics

The proof of concept validated that self-service analytics succeeds when business users get answers to their questions without requiring data team intervention.

Dashboards provide visibility. Natural language provides understanding. The POC demonstrated the combination can eliminate the bottleneck that prevents true self-service.

The POC showed that self-service analytics means:

Users review operational dashboards

Anomalies or trends trigger immediate questions

Natural language queries provide instant analysis

Decisions can happen in minutes instead of days

The difference between access and independence defines successful self-service analytics. Access means users can see data. Independence means users can ask why and get answers immediately.

The 30-day POC proved independence is achievable.

FAQs

What is a self-service analytics implementation POC?

A self-service analytics implementation POC (proof of concept) tests whether business users can query and analyze data independently using natural language tools like Databricks AI/BI Genie. The POC validates feasibility before full production deployment.

How long does a self-service analytics POC take?

The POC timeline depends on existing data infrastructure. Tenjumps completed this self-service analytics POC in 30 days by using Snowflake-Databricks federation rather than data migration. Organizations with clean, governed data can achieve similar timelines.

Does a Databricks POC require migrating data from Snowflake?

No. Databricks can query Snowflake tables directly through Unity Catalog federation. This POC kept Snowflake as the source of truth without data duplication or migration risk, reducing POC complexity.

What is Databricks AI/BI Genie?

Databricks AI/BI Genie is a natural language query interface that allows business users to ask questions in plain English and receive SQL-generated answers. POCs test whether Genie can eliminate the need for users to write queries or understand database structures.

Can a self-service analytics POC run alongside existing Power BI dashboards?

Yes. This POC kept existing Power BI dashboards operational while testing Databricks AI/BI Genie capabilities. Self-service analytics POCs can validate new approaches without disrupting functional BI infrastructure.

What does a self-service analytics POC prove?

A self-service analytics POC validates whether natural language capabilities can reduce data analyst dependency. The POC tests whether business users can ask follow-up questions directly and get immediate answers through conversational interfaces operating on governed datasets.
